I wrote yesterday's blog piece on Cargo Cult system administration as an intro to this post. It's essentially a warning: ignore the complexities of your systems at your peril. So if you haven't read it yet, read it before this entry.

Two days ago I was alerted that one of the FTP servers I had set up for a client had stopped working. This was a worry for a couple of reasons: first, the server is quite simple and there's nothing that should go wrong with it; second, this is the second time we've had a problem with this server this year (a unique issue which deserves its own blog entry). Two problems with a critical but simple server do not inspire confidence in a client.

As I said, the server was very basic: It's a stripped-down CentOS 7.5 server running only sshd, proftpd and webmin. It's also well hardened, exceeding best practice.

I quickly checked all the basics and found the server was running normally. There were no recent restarts, no updates to anything critical, and certainly nothing matching the timeline the client gave for when the server "stopped working". Nothing in any log file indicated anything other than FTP login failures, which started exactly when the client said. No shell logins suggested intrusion or tampering. The config files had not changed from the backup copies I always create. The test login I was given failed even after I changed the password.

At the suggestion of a colleague, I changed the account's shell from /sbin/nologin to /bin/bash. I pointed out that this wouldn't be the issue: setting /sbin/nologin is something I routinely do for accounts that don't need SSH access, and the server had been set up that way from the beginning.

However, changing the default shell did the trick!

???

I changed the default shell for all the FTP accounts and we were working again. But why? How?

The problem was now fixed and I could easily have left it at that, cargo cult sysadmin style. It would have been easy to say "bug in proftpd" or something like that.

The purpose of the /sbin/nologin shell is to let accounts authenticate to the server without getting shell access. When you establish an SSH session, your designated shell is run. These days it is usually bash, but it can be anything: csh, ksh, zsh, tcsh, etc. There are oodles of shells. The nologin shell is a simple executable that displays a message saying the account is not permitted shell access and exits. Simple, yet effective. The list of acceptable shells is kept in /etc/shells, which most sysadmins don't bother editing; they leave it at the defaults unless an unusual shell is used. It includes bash and nologin.
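To show how little nologin actually does, here is a minimal Python imitation. It is a sketch, not the util-linux source: the default message and the optional /etc/nologin.txt override follow the util-linux nologin man page as I understand it, and the function names are made up for illustration.

```python
DEFAULT_MESSAGE = "This account is currently not available."


def refusal_message(message_file: str = "/etc/nologin.txt") -> str:
    """Return the text a nologin-style shell would show the user."""
    try:
        with open(message_file, encoding="utf-8") as fh:
            return fh.read().rstrip("\n")
    except OSError:
        # No custom message file (or it is unreadable): use the default text.
        return DEFAULT_MESSAGE


def nologin(message_file: str = "/etc/nologin.txt") -> int:
    """Imitate a nologin-style shell: print the refusal, start nothing, fail."""
    print(refusal_message(message_file))
    return 1  # non-zero exit status, as the real nologin uses
```

The point is that nologin does no authentication work at all; whether an account with this shell may use a service is decided elsewhere, which is where /etc/shells comes in.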

I checked the PAM entry for proftpd. The PAM files list the requirements for successful authentication to each service; PAM is very powerful and quite granular. The PAM file for proftpd contained:

This was expected: ProFTPD authenticates by system password, except for users in the deny list, and requires a valid shell as enforced by the pam_shells.so module, which checks the user's shell against /etc/shells. So, checking /etc/shells:
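The decision pam_shells.so makes can be sketched in a few lines of Python. This is a simplified model, not the module's code: it takes the contents of /etc/shells as a string and only tests whether the user's shell appears verbatim on a line. The real module also refuses if /etc/shells is not a regular file or is world-writable, which this sketch skips. The helper names and the sample file contents are illustrative.

```python
def valid_shells(shells_text: str) -> set[str]:
    """Parse /etc/shells content into a set of shell paths.

    Blank lines and lines starting with '#' are skipped.
    """
    shells = set()
    for raw in shells_text.splitlines():
        line = raw.strip()
        if line and not line.startswith("#"):
            shells.add(line)
    return shells


def pam_shells_allows(user_shell: str, shells_text: str) -> bool:
    """Simplified pam_shells decision: allow only if the shell is listed verbatim."""
    return user_shell in valid_shells(shells_text)


# Illustrative /etc/shells contents with the nologin entries missing.
shells_now = "/bin/sh\n/bin/bash\n/usr/bin/sh\n/usr/bin/bash\n"
print(pam_shells_allows("/bin/bash", shells_now))      # True
print(pam_shells_allows("/sbin/nologin", shells_now))  # False
```

With /sbin/nologin absent from the list, every account using it fails this check, which is exactly the pattern of FTP login failures described above; adding /bin/bash as the shell makes the check pass again.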

Huh? /sbin/nologin is absent! I checked the datestamps on /etc/shells and /sbin/nologin: both showed Oct 31, just over a month ago????
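Once /sbin/nologin is missing from /etc/shells, every account still using it fails pam_shells. A quick audit shows the blast radius. The helper below is hypothetical (not part of any tool) and assumes local accounts in the standard seven-field /etc/passwd layout; accounts served from LDAP or other NSS sources are not covered.

```python
def accounts_failing_pam_shells(passwd_text: str, shells_text: str) -> list[str]:
    """Return names of accounts whose login shell is not listed in /etc/shells.

    Assumes the /etc/passwd layout: name:password:UID:GID:GECOS:home:shell.
    """
    listed = {
        line.strip()
        for line in shells_text.splitlines()
        if line.strip() and not line.strip().startswith("#")
    }
    failing = []
    for entry in passwd_text.splitlines():
        fields = entry.split(":")
        if len(fields) != 7:
            continue  # skip blank or malformed lines instead of guessing
        name, shell = fields[0], fields[6]
        # passwd(5): an empty shell field defaults to /bin/sh.
        if (shell or "/bin/sh") not in listed:
            failing.append(name)
    return failing
```

On the server itself you would pass in the contents of /etc/passwd and /etc/shells. Expect system accounts such as daemon or ftp to show up as well; they normally carry /sbin/nologin and are never meant to log in, so the interesting names are the real FTP users.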

This doesn't seem to add up. I interrogated the yum database to see if there had been any changes at all to proftpd or util-linux (util-linux supplies /sbin/nologin; on CentOS 7, /etc/shells itself belongs to the setup package). Yum doesn't show any modification, even though the file dates don't match the repo.

Outside of an intrusion, what could modify this file? It took some digging to find a potential culprit:

SCAP (Security Content Automation Protocol).

The CentOS/RHEL installer provides the following option under the heading "Security Policy":

This is where cargo cult system administration rears its head. The usual maxim is don't install or configure something you don't understand. If you do need it, make sure you understand it.

This screen is a front-end for scripts that ensure compliance with various security policies. It's dynamic and is designed to react to security threats in real time. It's a powerful tool. It's also horrendously complex and something of a black art. Acknowledging this, the OpenSCAP project advises:

There is no need to be an expert in security to deploy a security policy. You don’t even need to learn the SCAP standard to write a security policy. Many security policies are available online, in a standardized form of SCAP checklists.

Very comforting. Don't look too closely, just check the box and forget we exist. Ignore the Law of Leaky Abstractions and allow the script to take care of your security policies.

Notice that the screen above shows the default profile contains no rules. This is from the CentOS 7.2 installation. From the CentOS wiki on Security Policy:

Previously, with the 7.3.1611 ISOs, we knew that all 4 of the STIG installs produced an sshd_config file that would not allow SSHD to start. This was an upstream issue (Bug Report bz 1401069). This issue has been fixed with the 7.4.1708 ISOs and all installs produce working SSHD now.

Security profiles "Standard System Security Profile" and "C2S for CentOS Linux 7" can't be used in the CentOS 7.5.1804 installer. A bug causes the installer to require a separate partition for /dev/shm, which is not possible. RHBZ#1570956

So until CentOS 7.4, you couldn't use SCAP without ending up with a broken system. That pretty much ensured it didn't get used.

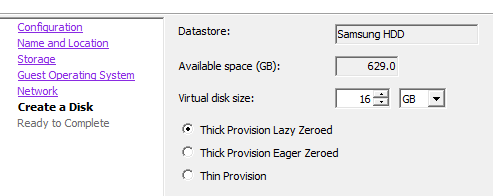

For this server, being CentOS 7.5, I'm pretty sure I chose the "Standard System Security Profile", thus becoming (in this instance) a cargo cult sysadmin. I selected an option whose consequences I didn't fully understand: It had a nice-sounding name, the description sounded kewl and it seemed to be the 'real' default. I remember looking up the definition and finding it was a set of 14 very basic rules for sshd and firewalld security. What could go wrong?

What indeed.

It wasn't long before I found CVE-2018-1113. To quote the important part:

setup before version 2.11.4-1.fc28 in Fedora and Red Hat Enterprise Linux added /sbin/nologin and /usr/sbin/nologin to /etc/shells. This violates security assumptions made by pam_shells and some daemons which allow access based on a user's shell being listed in /etc/shells. Under some circumstances, users which had their shell changed to /sbin/nologin could still access the system.

The latest modification date on the CVE was Oct 31, the same datestamp I found on /etc/shells and /sbin/nologin. This looked curiously like a smoking gun.
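The CVE text describes /sbin/nologin and /usr/sbin/nologin being added to /etc/shells by older setup packages, so the fix takes them out again. If a backup copy of /etc/shells is available, comparing it with the live file confirms whether that is precisely what changed. A minimal sketch; the function name is hypothetical and both arguments are file contents as strings.

```python
def shells_diff(old_text: str, new_text: str) -> tuple[list[str], list[str]]:
    """Compare two versions of /etc/shells and return (removed, added) entries.

    Both arguments are file contents, e.g. a backup copy and the live file.
    Comments and blank lines are ignored; results are sorted for stable output.
    """
    def parse(text: str) -> set[str]:
        return {
            line.strip()
            for line in text.splitlines()
            if line.strip() and not line.strip().startswith("#")
        }

    old, new = parse(old_text), parse(new_text)
    return sorted(old - new), sorted(new - old)
```

A result of only the two nologin paths under "removed", and nothing added, would point at the CVE remediation rather than tampering.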

At some point, this CVE translated into Red Hat security advisory RHSA-2018:3249.

Time to check the SCAP definitions:

This is curiously close to the date FTP stopped working. It's also as far as I could get forensically. I'm not sure how the SCAP definitions were updated; I assume SCAP proactively fetches them and then applies them. That makes sense if SCAP is supposed to be dynamic, responding to security advisories in real time. That was the bit I overlooked in the description: if anything operates in real time, it must have its own independent update mechanism.

After submitting my report I was asked if we should abandon SCAP. Again, the cargo cult administration reaction would be to say "yes". However, after some careful thinking I responded with "no". Applying the five whys leads to the appropriate conclusion:

Problem: FTP not working.

1st Why: Users could not log in.

2nd Why: PAM authentication was failing.

3rd Why: /sbin/nologin was not listed in /etc/shells.

4th Why: A security policy update removed /sbin/nologin from /etc/shells.

5th Why: Use of /sbin/nologin is subject to a security vulnerability.

Using /sbin/nologin to prevent shell access was my choice; however, my attempt to harden the system by denying SSH logins for users fell outside the security policy. The problem was the use of /sbin/nologin in the first place (an old practice) rather than the preferred method of modifying the sshd config or placing a restriction within PAM.
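One way to restrict SSH in the sshd config, offered here as an example rather than what this server ended up using, is the DenyGroups directive in sshd_config, which refuses SSH logins for users whose primary or supplementary groups match a listed pattern. FTP accounts could then keep a shell that is listed in /etc/shells, so pam_shells is satisfied, while sshd still turns them away; the config line would be something like DenyGroups ftponly, where ftponly is a hypothetical group name. The Python below only approximates sshd's matching rule to show the decision; it is not sshd's code.

```python
from fnmatch import fnmatchcase


def sshd_denygroups_blocks(user_groups: list[str], deny_patterns: list[str]) -> bool:
    """Approximate sshd_config's DenyGroups check.

    Returns True if any of the user's groups (primary or supplementary)
    matches any deny pattern. Only the * and ? wildcards are intended;
    fnmatchcase would also accept [...] classes, which sshd does not use.
    """
    return any(
        fnmatchcase(group, pattern)
        for group in user_groups
        for pattern in deny_patterns
    )


# Hypothetical setup: FTP-only accounts keep /bin/bash (so pam_shells passes)
# but belong to a group named "ftponly" that sshd is told to refuse.
print(sshd_denygroups_blocks(["users", "ftponly"], ["ftponly"]))  # True
print(sshd_denygroups_blocks(["users", "wheel"], ["ftponly"]))    # False
```

The design point is that the SSH restriction lives in the service that grants shells, not in a shared file like /etc/shells that other services and security policies also depend on.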

The lesson (for me) is pretty sobering: If you intend to use best practice, use ONLY best practice, particularly with security policy. System modifications often contain leaky abstractions and are usually tested only against best and default practice. If you choose to step outside that box, make damn sure you fully understand the system and the consequences.

And don't check boxes you don't fully understand. Even boxes that promise to magically summon system security from the sky.